Meet LiteLLM Agent Platform: A Kubernetes-Based, Self-Hosted Infrastructure Layer for Isolated Agent Sandbo…

What changed

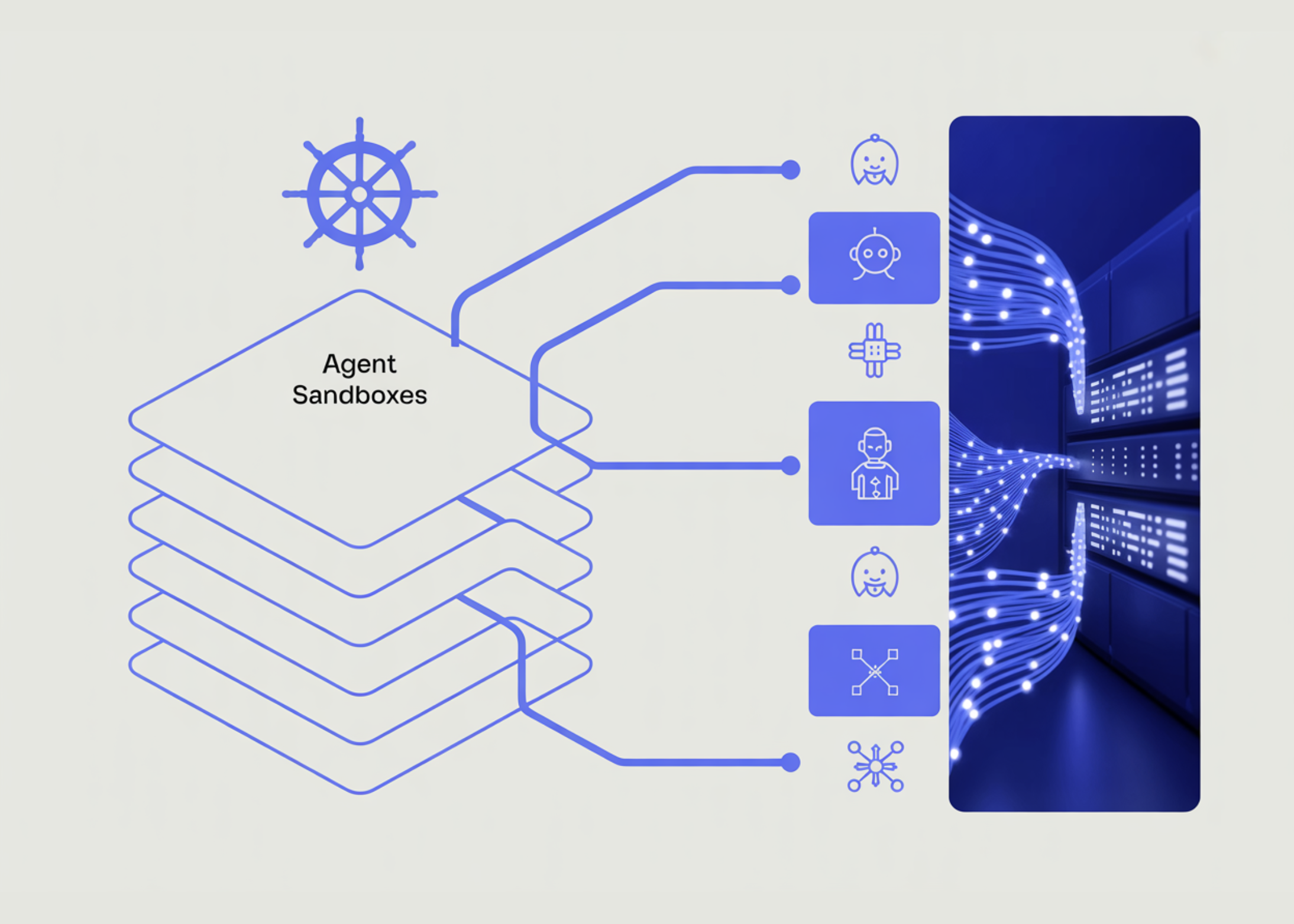

BerriAI, the team behind LiteLLM AI Gateway, has open-sourced LiteLLM Agent Platform. The platform is designed to run AI agents in production, with a focus on an isolated sandbox for each agent and persistent session management. It runs on Kubernetes, which suits it to scalable, self-hosted environments where agents must keep context and state across restarts and serve multiple teams.

Why builders should care

Running AI agents locally or in a single script is straightforward, but production adds requirements. Each agent needs an isolated environment so that agents cannot interfere with one another, and resources must be managed securely. Persistent session handling keeps agents from losing context, which is critical for workflows that depend on long-running state or multi-step interactions. LiteLLM Agent Platform addresses these gaps with a Kubernetes-based service layer, an approach relevant to builders who want to avoid vendor lock-in and keep operational control.
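To make the two requirements concrete, here is a minimal Python sketch of per-agent isolation and session persistence. It is a toy illustration only and does not use or reflect LiteLLM Agent Platform's actual API: the `SessionStore` class, the one-directory-per-agent layout, and the agent and session names are all hypothetical, and a temporary directory stands in for durable storage.

```python
import json
import tempfile
from pathlib import Path


class SessionStore:
    """Hypothetical toy store: one directory per agent, one JSON file per session."""

    def __init__(self, root: Path) -> None:
        self.root = Path(root)

    def _path(self, agent_id: str, session_id: str) -> Path:
        # A separate directory per agent keeps histories from mixing.
        return self.root / agent_id / f"{session_id}.json"

    def load(self, agent_id: str, session_id: str) -> list:
        path = self._path(agent_id, session_id)
        if not path.exists():
            return []
        return json.loads(path.read_text())

    def append(self, agent_id: str, session_id: str, message: str) -> None:
        path = self._path(agent_id, session_id)
        path.parent.mkdir(parents=True, exist_ok=True)
        history = self.load(agent_id, session_id)
        history.append(message)
        # State is written to disk on every update, so it outlives the process.
        path.write_text(json.dumps(history))


def demo() -> tuple:
    with tempfile.TemporaryDirectory() as tmp:
        store = SessionStore(Path(tmp))
        store.append("agent-a", "s1", "step 1 done")
        store.append("agent-b", "s1", "unrelated work")

        # Simulate a restart: build a fresh object over the same storage.
        restarted = SessionStore(Path(tmp))
        restarted.append("agent-a", "s1", "step 2 done")
        return restarted.load("agent-a", "s1"), restarted.load("agent-b", "s1")


if __name__ == "__main__":
    print(demo())
```

In this sketch the history of `agent-a` survives the simulated restart, and `agent-b` never sees it. A production platform would enforce the same two properties with container-level sandboxes and durable storage rather than local files.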

The practical takeaway

LiteLLM Agent Platform targets scalability and reliability in AI agent deployment. Builders get self-hosted infrastructure that isolates each agent’s execution environment, reduces the risk of cross-contamination between agents, and keeps session history available after service interruptions. That should lower operational friction for teams that distribute AI workloads and collaborate on agent-based automation without relying on cloud providers. Because it runs on Kubernetes, it fits existing containerized workflows, which should make deploying and managing agents in production smoother.

What to watch next

Watch for adoption signals among organizations running AI agents at scale, especially those wary of cloud dependency or in need of fine-grained operational control. Support for newer AI models, orchestration enhancements, or integrations with popular agent frameworks could make LiteLLM Agent Platform more attractive. Competitors offering hosted agent management may respond, so the key question is how LiteLLM Agent Platform balances ease of use against the complexity of self-hosting.

AI Quick Briefs Editorial Desk