Zyphra Releases ZAYA1-8B-Diffusion-Preview: The First MoE Diffusion Model Converted From an Autoregressive …

What happened

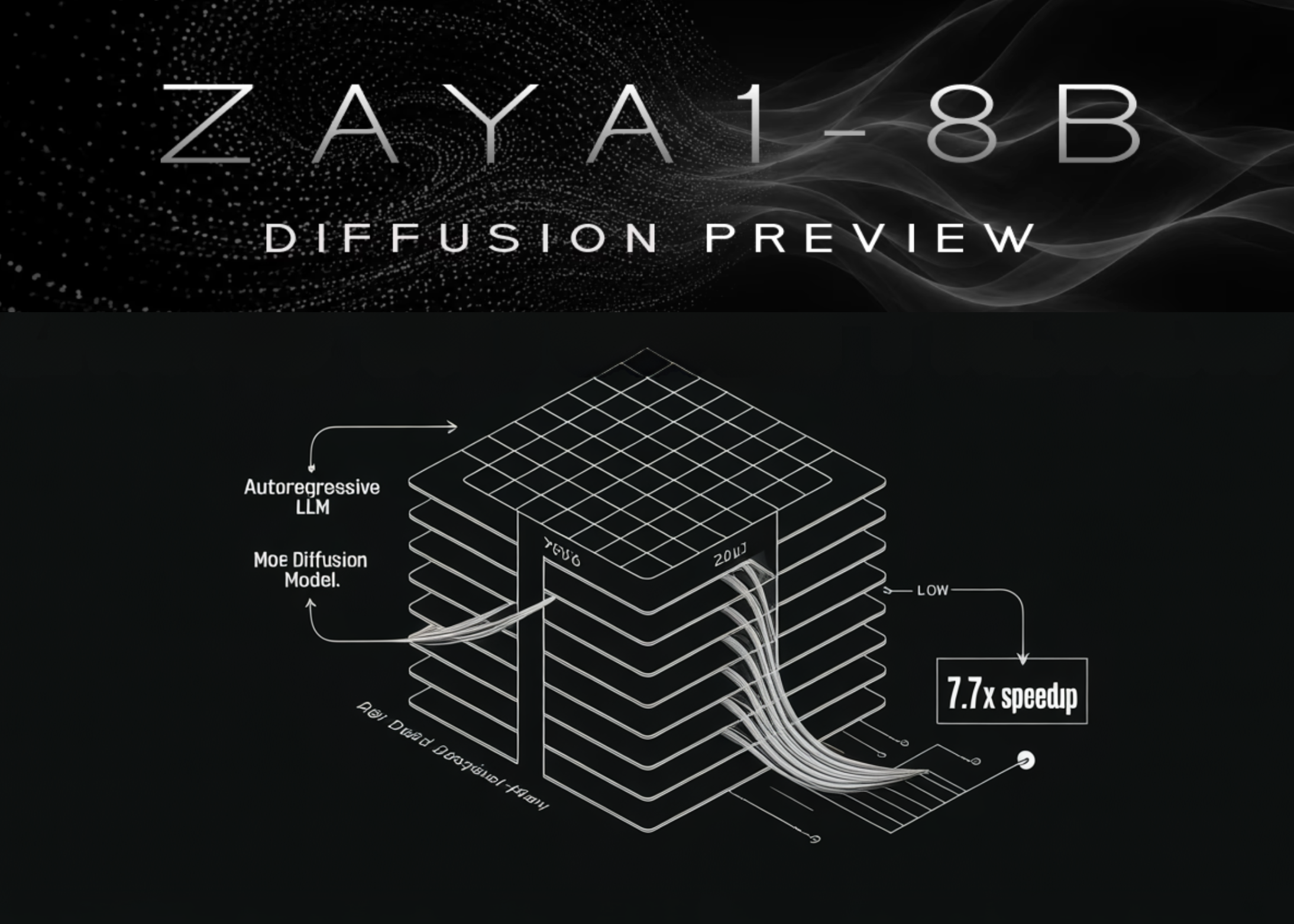

Zyphra has released ZAYA1-8B-Diffusion-Preview, the first Mixture-of-Experts (MoE) diffusion model converted from an autoregressive large language model (LLM). The model is competitive with its autoregressive original on evaluation performance while delivering up to 7.7 times faster inference. The speedup comes from replacing autoregressive decoding, which produces one token per forward pass and is therefore limited by how fast the GPU can read model weights from memory, with a discrete diffusion decoding process that is compute-bound. The approach takes advantage of the trend of GPU FLOPs scaling faster than memory bandwidth.
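To make the memory-bound versus compute-bound distinction concrete, here is a minimal roofline-style sketch in Python. It is an idealized illustration, not Zyphra's method or measurements: the hardware figures and the active parameter count are hypothetical placeholders, the function names are invented for this example, and the model ignores attention, KV-cache traffic, MoE routing effects, and the number of denoising passes a diffusion decoder needs.

```python
# Idealized roofline sketch: one forward pass takes as long as the slower of
# (a) streaming the active weights from GPU memory and (b) doing the math.
# All constants are hypothetical placeholders, not ZAYA1 or vendor figures.

ACTIVE_PARAMS = 1e9      # assumed active parameters per token (placeholder)
BYTES_PER_PARAM = 2      # assumed 16-bit weights
MEM_BW = 2e12            # assumed memory bandwidth, bytes per second
PEAK_FLOPS = 1e15        # assumed peak compute, FLOPs per second

WEIGHT_BYTES = ACTIVE_PARAMS * BYTES_PER_PARAM
FLOPS_PER_TOKEN = 2 * ACTIVE_PARAMS  # common approximation: 2 FLOPs per parameter


def pass_time_s(weight_bytes, flops_per_token, tokens_per_pass, mem_bw, peak_flops):
    """Estimated time for one forward pass, in seconds."""
    memory_time = weight_bytes / mem_bw
    compute_time = flops_per_token * tokens_per_pass / peak_flops
    return max(memory_time, compute_time)


def is_memory_bound(weight_bytes, flops_per_token, tokens_per_pass, mem_bw, peak_flops):
    """True when weight streaming takes at least as long as the arithmetic."""
    memory_time = weight_bytes / mem_bw
    compute_time = flops_per_token * tokens_per_pass / peak_flops
    return memory_time >= compute_time


def tokens_per_second(weight_bytes, flops_per_token, tokens_per_pass, mem_bw, peak_flops):
    """Tokens processed per second if every pass handles tokens_per_pass tokens."""
    t = pass_time_s(weight_bytes, flops_per_token, tokens_per_pass, mem_bw, peak_flops)
    return tokens_per_pass / t


if __name__ == "__main__":
    # tokens_per_pass = 1 corresponds to autoregressive decoding of one sequence;
    # larger values stand in for a decoder that updates many positions per pass.
    for n in (1, 16, 1000):
        args = (WEIGHT_BYTES, FLOPS_PER_TOKEN, n, MEM_BW, PEAK_FLOPS)
        regime = "memory-bound" if is_memory_bound(*args) else "compute-bound"
        print(f"{n:>5} tokens/pass: {tokens_per_second(*args):,.0f} tokens/s ({regime})")
```

Under these assumptions, a pass over a handful of tokens costs about the same as a pass over one, because the weights must be read either way. That is the headroom a parallel decoder can exploit. Real speedups will be smaller than the tokens-per-pass ratio, since a diffusion decoder typically needs several passes to finalize each block of tokens.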

Why it matters

Speed matters in real-world AI deployments, where latency and throughput drive both cost and user experience. Moving from autoregressive to diffusion-based decoding without a drop in quality means Zyphra can offer faster responses while easing pressure on GPU memory bandwidth. For operators, the shift could lower cloud costs or allow more predictions per GPU, which matters as GPUs struggle to keep pace with growing model sizes and complexity. It also challenges the view that autoregressive decoding is the gold standard for MoE LLMs by showing that diffusion can match its performance with a significant speed advantage.

What to watch next

Watch whether this conversion approach scales efficiently beyond the 8-billion-parameter level, and whether Zyphra or others release similar MoE diffusion models at larger sizes. Look for adoption signals, especially from teams running large-scale inference pipelines that are sensitive to latency and cost. Also watch whether cloud providers and hardware vendors tune their optimizations for diffusion decoding workloads, which shift the bottleneck from memory bandwidth to raw compute. The result could reshape LLM architecture and inference economics in the near term.

AI Quick Briefs Editorial Desk