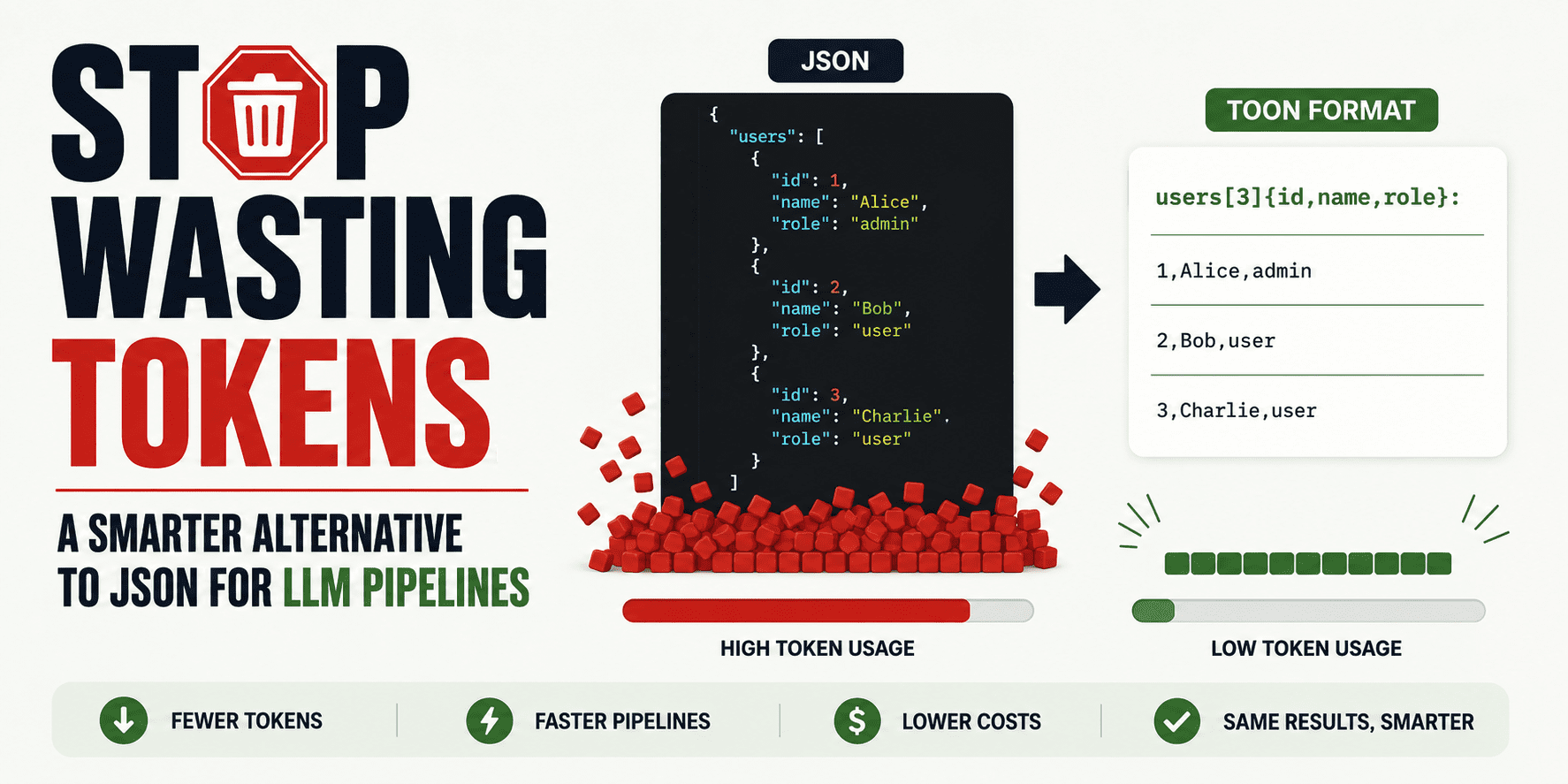

Stop Wasting Tokens: A Smarter Alternative to JSON for LLM Pipelines

Many developers and data scientists working with large language models (LLMs) are paying more than they should in token costs by feeding data in JSON format. This article highlights how JSON, a common way to structure data, can lead to inefficient use of tokens when used in LLM pipelines. The article introduces smarter alternatives that reduce token waste and save money while maintaining or improving data clarity for these models.

This matters because token usage directly affects the cost and speed of using LLMs, especially for businesses dealing with large volumes of data. When structured data is wrapped in JSON, it often includes redundant formatting characters and nested structures that do not add meaningful information but still consume tokens. Cutting down unnecessary tokens can lower expenses, improve response times, and enable developers to include more valuable data in their prompts or completions without hitting length limits.

The root of the problem is that LLMs consume tokens based on every character or piece they see, including brackets, quotes, and punctuation used by JSON. While JSON is great for human readability and software interoperability, it is not optimized for LLM token efficiency. As interest and investment in LLM applications grow, it has become clear that developers need more concise, LLM-friendly formats or encoding schemes that retain the structure without bloating token usage. Some alternatives use simplified notation, delimiter-based formats, or custom schemas designed with token economy in mind.

This shift signals a growing awareness of the practical constraints and costs of working with LLMs beyond just model accuracy or capability. Developers and businesses should pay closer attention to the input formats they use and experiment with alternatives that maintain data integrity but reduce token counts. The next step will likely include wider adoption of these efficient data representations, toolkits to transform JSON to leaner formats automatically, and possibly input compression methods specifically tailored to language models. Watching how these changes affect LLM adoption costs and application scalability will be important for anyone working with or depending on these models.

— AI Quick Briefs Editorial Desk