LightSeek Foundation Releases TokenSpeed, an Open-Source LLM Inference Engine Targeting TensorRT-LLM-Level …

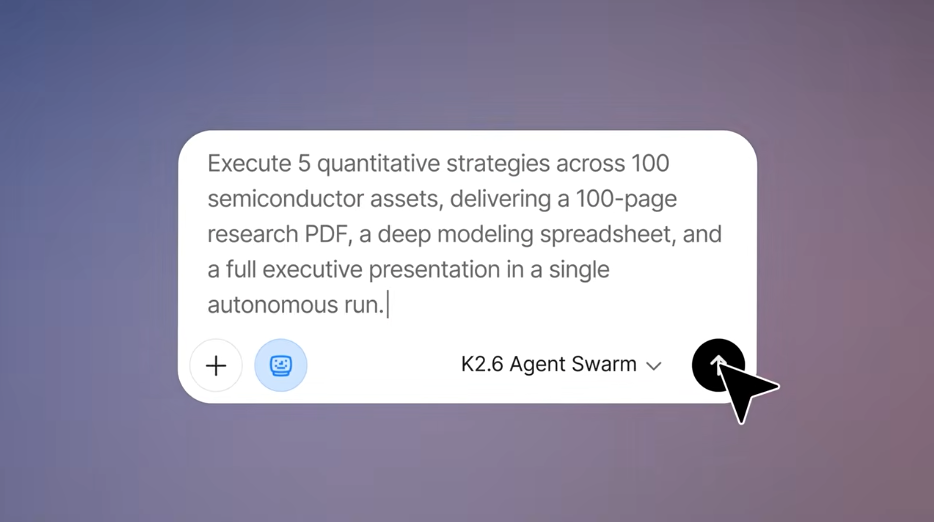

The LightSeek Foundation has released TokenSpeed, an open-source inference engine for large language models (LLMs) designed to deliver performance comparable to NVIDIA's TensorRT-LLM. TokenSpeed focuses on the speed and efficiency of LLM inference, which is the process of running a trained model to generate outputs. The release targets workloads typical of agentic coding tools such as Claude Code, Codex, and Cursor. In these tools, long and repeated interactions with codebases and development tasks demand fast, efficient computation.

This development addresses a critical bottleneck in AI deployment: inference efficiency. As AI tools move beyond isolated use cases and become core components of software development infrastructure, the speed at which LLMs process requests and respond matters more. Faster inference engines let developers and businesses get outputs from AI systems more quickly. That makes these tools more practical for real-world applications such as coding assistance, automated problem-solving, and broader enterprise workflows. If TokenSpeed does deliver performance on par with the well-regarded TensorRT-LLM, it offers the community an open, transparent, and potentially more accessible alternative.

The need for this kind of engine comes from rising demand for agentic AI systems, which act autonomously or semi-autonomously in complex environments. These systems make fast, repeated model calls to keep interactions fluid and decisions effective. High-performance inference has often depended on solutions tied to specific hardware or vendor ecosystems. TokenSpeed aims to change this by offering an open-source option that can integrate with existing tooling, potentially lowering barriers to entry and fostering innovation in AI deployment.

What makes TokenSpeed especially interesting is its focus on achieving high throughput and low latency while working with popular model frameworks. This suggests LightSeek Foundation is pushing toward democratizing advanced AI infrastructure and supporting a wider range of use cases beyond the traditional settings that major cloud providers dominate. Looking ahead, this could encourage more experimentation in agentic AI projects, accelerate the deployment of AI-enhanced development tools, and pressure other inference platforms to open up or improve their efficiency.

For developers and organizations planning to adopt or build on language model technology, TokenSpeed's release is a reason to watch the evolving AI infrastructure landscape more closely. Open-source tools with competitive performance invite broader collaboration and could accelerate the adoption of powerful AI systems in everyday software workflows. The release also hints at a shift away from purely hardware-dependent performance gains and toward software optimizations that are accessible to the wider community.

— AI Quick Briefs Editorial Desk