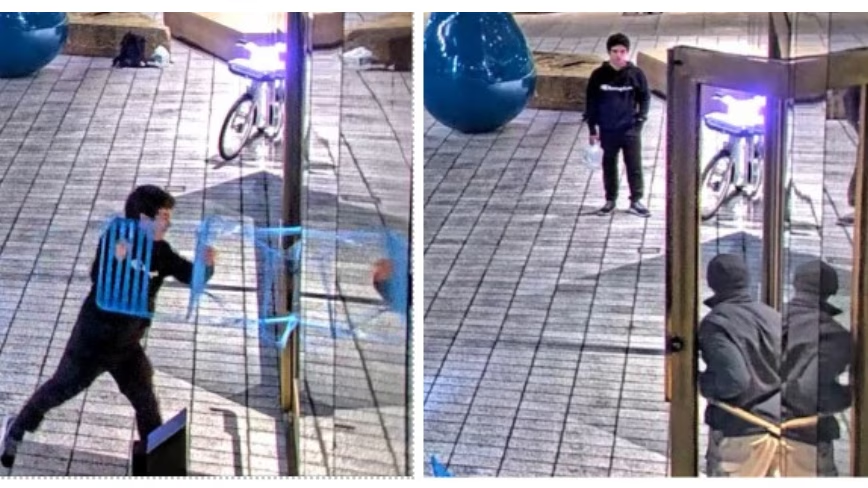

He carried a kill list of AI CEOs and a jug of kerosene. His lawyer called it a property crime. The charges…

Daniel Moreno-Gama, 20, has pleaded not guilty to multiple charges, including two counts of attempted murder, after being accused of attacking the San Francisco home of OpenAI CEO Sam Altman with a Molotov cocktail. After the incident, Moreno-Gama reportedly walked several miles to OpenAI’s headquarters and threatened to set the building on fire. His lawyer has characterized the case as a property crime, a framing that contrasts sharply with the stakes: the charges he faces carry a potential sentence of life in prison.

The case raises pressing questions about security and about the growing hostility directed at leaders in artificial intelligence. As AI technologies become more influential and reach into more aspects of daily life, figures like Sam Altman have become prominent targets for people who feel threatened by, or resentful of, the technology’s rapid development. Attacks of this kind show how the anxiety and political tension surrounding AI can spill over into violence, and they underscore the need for AI organizations to take the physical security of their leaders and employees seriously as the technology continues to evolve.

The incident ties into a broader backlash against AI progress. Many people are uneasy about the power and potential impact of AI systems, which can appear to advance faster than public oversight or ethical review can keep up. Common concerns include job displacement, loss of privacy, misinformation, and the risk of autonomous systems behaving unpredictably. These tensions have fueled protests, policy debates, and, unfortunately, acts of aggression, with this attack among the most extreme examples. It shows that AI’s societal challenges are no longer abstract debates but are surfacing as real-world conflict.

The charges against Moreno-Gama could serve as a warning to anyone contemplating violence as part of a backlash against technology. The case also opens a discussion about how society should address and manage dissent over AI development. It may push tech companies to strengthen their security measures and work more closely with law enforcement, and the legal arguments made in court could shape how violence connected to AI conflicts is treated in the future. Everyone involved in AI, from developers to policymakers, should follow the case closely: it signals a new phase in which social and ethical tensions around AI are escalating from debate into criminal acts.

— AI Quick Briefs Editorial Desk